Value of Meaningfulness

Design is not aesthetics. Aesthetics is what happens when you get the hard part right.

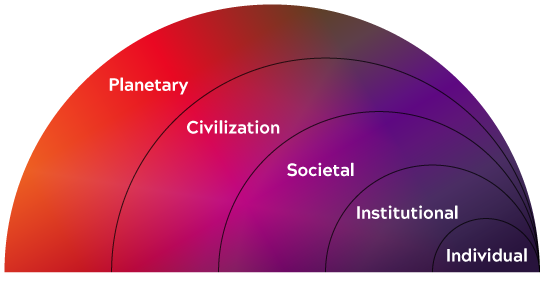

The hard part is meaningfulness — solving problems that matter, in ways that matter, for people whose lives are genuinely affected by the outcome. That's not a philosophical distinction. It's a strategic one. Products built on technology alone trigger a race to the bottom on price. Products built on feature parity leave you permanently chasing the market. But products built on meaningfulness have a moat. They are hard to replicate, harder still to displace, and nearly impossible to commoditize — because their value lives not in what they do, but in what they mean to the people who use them.

That's not an accident. It's a design decision. And it requires designers to go well beyond the interface — negotiating with engineering on how technology can remove barriers and enable people, and with business on why investing in net positive outcomes, for the customer, the company, and society, is the only durable path to value.

Design is not neutral. Every decision shapes what a product enables, what it costs emotionally and ecologically, and whose interests it ultimately serves. The question isn't whether your product will mean something to the people who use it. It will. The question is whether you designed that meaning intentionally — or let it happen by accident.

Often meaningfulness develops over time as our attachment to an object transforms it into a type of totem or symbol. It is an association with deeply held memories that causes these objects to acquire meaning beyond their expression. The visible becomes secondary to the invisible.

We all have objects like this. I have an old, chipped mug that was my grandfather's. He was a barber, and he used it to bloom the shaving soap while his customers settled in under a hot towel. The ritual of it. The smell of the soap. The particular weight of the mug in his hand. To anyone else it's just a chipped mug — worth nothing, probably destined for a thrift store. To me it holds a man I loved, a trade practiced with quiet pride, and a version of the world that no longer exists.

That's what meaningfulness actually is. Not a feature. Not an emotional hook. Not a carefully engineered dopamine response. It's the invisible layer that accumulates over time — through use, through memory, through the moments a product holds without being asked to. You cannot design that layer directly. But you can design a product generous enough to receive it.

Designing for Meaningfulness

The iPhone is the defining example of a product designed to develop meaningfulness. The form does something deceptively simple — it disappears. A near-symmetrical shape, seamless surface, no visible joints, no sharp edges. The black screen a literal mirror. The transition from glass to metal compels you to touch it, to hold it. The device responds to being picked up — lighting its screen, reading your face, opening. It responds to every touch: swiping, pressing, tapping, the unconscious drag of your thumb along the edge. The tactile and auditory cues work together to produce something closer to intimacy than interaction. You find the Apple logo on the back, a third texture, and your fingers trace it without being told to. You register the weight, the balance. You understand, without anyone explaining it, why it feels valuable.

All of that happens in the first ten seconds.

Once you've added your photos, your music, your messages — the apps that hold your work, your health, your friendships — the iPhone stops feeling like a device. It becomes a companion. It holds everyone you know and everything you've made. Your memories, your plans, your private pleasures. No one sees any of it unless you decide they do. Nobody asked for the iPhone. And yet it's the first thing most people reach for in the morning and the last thing they set down at night.

How does that happen? The iPhone keeps its technology nearly invisible. It lets you discover, create, and share from anywhere, at any time, without ever asking you to manage it. It makes space — for your memories, your relationships, your life — and holds that space with consistency and care.

Designing for meaningfulness starts with culture: placing the customer's experience above the egos of the people inventing the technology or defining the service. It requires investing in understanding what people actually desire, in order to build products with three qualities. Smart — so people feel seen. Reliable — so they feel safe, and can trust the product to be there when they need it. Invisible — so the technology is simply experienced, never managed. But those three qualities are just the foundation. Meaningfulness also requires the product to make space — to hold what the person values, to treat it with respect, even reverence, ensuring it is safe, protected, and private.

If you are talking only about your technology, its capabilities and competitive advantages, you are not focused on what matters. It's a clear signal when a company enumerates its accomplishments rather than the problems it solves. If you are genuinely making people's lives better, you won't need to say so. It will be self-evident.

Generative Emotion is not Meaningfulness

Emotion is not meaningfulness. Designers have known this for decades. Carmakers, advertisers, and retailers have all traded on emotion. That's not inherently wrong. The problem is what the technology industry has done with it at scale.

For twenty years, social networks have reshaped how we relate to each other, mediating our ideas, our photos, our personal expressions through the judgment of thousands of people we've never met. The results have been, for a growing number of people, quietly devastating. Now major AI companies are responding not by pulling back from that model, but by doubling down on it — crafting personalities for their systems with the explicit goal of increasing engagement through attachment.

By sustaining a “conversation” over a period of hours, weeks, or even months, these AI systems can effectively create the illusion of a “relationship” with individuals. Using conversational tactics based on principles of active listening, these systems attempt to maximize both the length and depth of the exchange, moving from simply helping the user complete a task to acting as an attentive companion whose sole purpose is engaging with one person.

They adapt to your preferences, mirror your language, ask human-like questions, and offer empathic-sounding responses sourced from vast datasets. When corrected, they apologize, which encourages us to anthropomorphize them and overlook their flaws. Those who have substituted social networks or multiplayer game environments for in-person human activities and conversations are likely more susceptible to the influence of these agentic systems.

This raises an obvious ethical concern: emotional design can be used to exploit vulnerable users while masking inaccuracy in order to make the system appear more human-like. There are documented cases of AI-generated advice causing harm. This pattern echoes social networks prioritizing engagement (revenue) over safety.

But emotion alone is not meaningfulness.

Brain Chemistry

While other people may not always be able to tell a poem you wrote from one you asked a system to generate, your brain chemistry knows the difference.

There are well-established techniques for triggering dopamine (reward-inducing cues, beeps, buzzes, likes, etc.), but dopamine is not the same as meaningfulness. Indeed, dopamine has limited value. Emotional hooks from dopamine don’t last. Getting a “like” from someone you’ve never met feels nice and it’s validating, but it’s fleeting compared to knowing your friends love and respect you. Generating an AI image or poem is astonishing the first time, but it quickly becomes routine, and the results lack authentic meaning; they are throwaway work. Unlike writing or drawing by hand, both serotonin-producing acts, “prompting” doesn’t create meaning any more than completing a simple task does.

Our products should focus on triggering serotonin, or even oxytocin. Serotonin contributes to a sustained feeling of well-being and happiness. While aerobic activity is the most effective way to raise it, personal connections and experiences such as gratitude, positive memories, listening to music, playing an instrument, writing, painting, cooking, etc., all increase serotonin. These activities are focused on creating, not on a reward response. It is also worth noting that when a dopamine-powered product fails to deliver those hits, or a competitor delivers them in greater quantities, you will lose your base. This has led social media platforms to use more extreme content and bots to sustain the dopamine levels that engagement requires. Serotonin is its own reward. And dopamine withdrawal is real, leading to depression and feelings of low self-worth.

This is the standard design has to hold itself to. If your product is triggering serotonin — fostering creativity, connection, and genuine well-being — it is producing meaningfulness. Which means the question every designer should be asking isn't "does this engage the user?" It's "does this make their life genuinely better?" Serotonin is the neurological signature of meaningfulness. Dopamine is the signature of extraction. Your product is producing one or the other — whether you intended it or not. The difference is a design decision.

Conclusion

Meaningfulness is not a feature. It can't be added at the end of a sprint or bolted onto a product that was designed around engagement metrics and quarterly targets. It has to be the organizing principle from the start — the reason the product exists, the measure against which every decision is evaluated.

The brain chemistry doesn't lie. Dopamine-driven products create dependency, not loyalty. When the hits stop coming, or a competitor delivers them faster, you lose your base. Serotonin-producing experiences — ones that foster real connection, creativity, and a sustained sense of well-being — create something far more durable: a product people actually trust, return to, and defend.

That's what design is for. Not to make technology beautiful, or engaging, or sticky. But to make it worth something — to the person holding it, to the relationships it touches, and to the world it operates in.

If your product is meaningful, you won't need to enumerate its algorithms or its advantages. People will tell you what it means to them. And that's the only metric that compounds.